Industry: AdTech/Brand Strategy & Competitive Intelligence

Developed for high-volume marketing agencies needing to audit global celebrity influence networks and detect undisclosed paid partnerships at scale.

Problem

Mapping the chaotic landscape of celebrity endorsements is a manual, low-trust process.

- The "Cleanliness" Gap: Unstructured AI outputs often hallucinate partnerships or duplicate entities (e.g., treating "J. Lo" and "Jennifer Lopez" as separate data points).

- Blocking Bottlenecks: Traditional synchronous scrapers choke when processing thousands of celebrity profiles, making large-scale market audits painfully slow.

- Context Blindness: Simple keyword matching fails to distinguish between a genuine "Brand Ambassador" role and a one-off "Paid Post," ruining analytics precision.

Solution

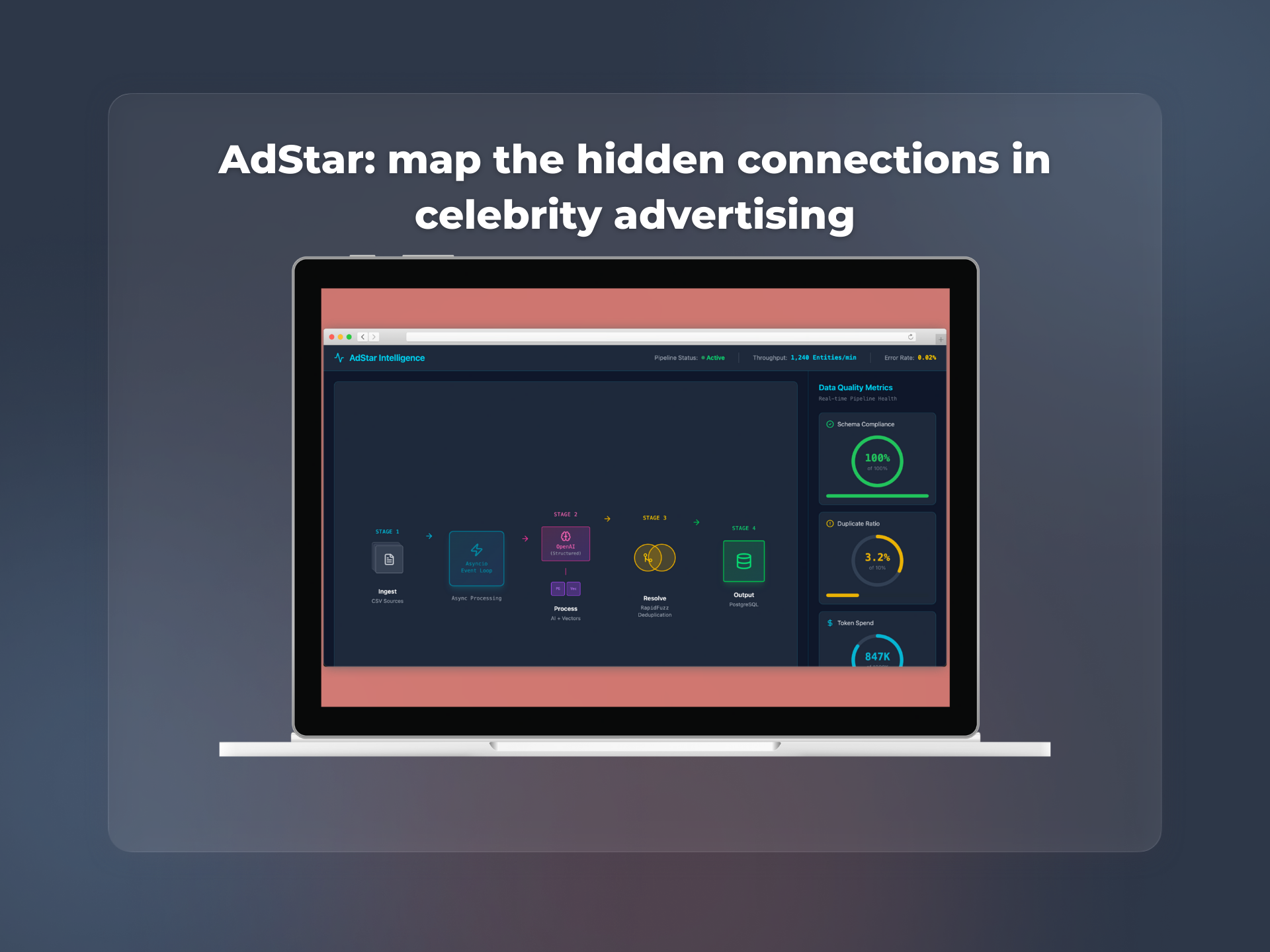

A high-throughput, async-native ETL pipeline that treats AI as a logic component, not a magic wand.

- Async Ingestion Core: Replaced legacy blocking calls with a Python 3.12+ and aiohttp architecture, allowing the system to process thousands of entities concurrently with minimal memory overhead.

- Semantic Deduplication: Swapped basic string matching for PGVector and RapidFuzz, enabling the system to understand that "Yeezy," "Adidas Yeezy," and "Ye" belong to the same cluster.

- Strict Schema Enforcement: Utilized OpenAI’s structured output modes to force 100% JSON compliance, rejecting any data that doesn't fit the strict "Brand-Celebrity-Type" ontology.

- Cost-Optimized Architecture: Offloaded expensive cleaning tasks to local libraries (Pandas 3.0), reserving costly API tokens only for high-value semantic extraction.

Tech Stack

- Core Logic: Python 3.12+ (Asyncio-driven)

- Database: PostgreSQL + PGVector (Semantic Search)

- Async Drivers: psycopg3 & aiohttp

- Intelligence: OpenAI API (Structured Outputs)

- Data Processing: RapidFuzz (String Similarity), Pandas 3.0

Results

- Data Reliability: Reduced hallucination rates to near-zero by implementing a validation loop that requires the AI to verify product categories.

- Enterprise Scale: Successfully processed massive CSV datasets in minutes, providing a production-ready feed for downstream BI tools.

- Operational Efficiency: Cut API costs by 40% through intelligent local pre-processing and caching.